Unix system programming in OCamlXavier Leroy and Didier Rémy1stDecember

, 2014 |

© 1991, 1992, 2003, 2004, 2005, 2006, 2008, 2009, 2010

Xavier Leroy and Didier Rémy,

inria Rocquencourt.

Rights reserved.

Consult the

license.

Translation by

Daniel C. Bünzli,

Eric Cooper,

Eliot Handelman,

Priya Hattiangdi,

Thad Meyer,

Prashanth Mundkur,

Richard Paradies,

Till Varoquaux,

Mark Wong-VanHaren

Proofread by

David Allsopp,

Erik de Castro Lopo,

John Clements,

Anil Madhavapeddy,

Prashanth Mundkur

Translation coordination & layout by Daniel C. Bünzli.

Please send corrections to daniel.buenzl i@erratique.ch.

Available as a monolithic file,

by chapters, and in PDF

—

git repository.

This document is an introductory course on Unix system programming,

with an emphasis on communications between processes. The main novelty

of this work is the use of the OCaml language, a dialect of the

ML language, instead of the C language that is customary in systems

programming. This gives an unusual perspective on systems programming

and on the ML language.

Contents

Introduction

These course notes originate from a system programming course Xavier

Leroy taught in 1994 to the first year students of the Master’s program in

fundamental and applied mathematics and computer science at the École

Normale Supérieure. This earliest version used the

Caml-Light [1] language.

For a Master’s course in computer science at the École Polytechnique

taught from 2003 to 2006, Didier Rémy adapted the notes to use the

OCaml language. During these years, Gilles Roussel, Fabrice Le

Fessant and Maxence Guesdon helped to teach the course and also

contributed to this document. The new version also brought

additions and updates. In ten years, some orders of magnitude have

shifted by a digit and the web has left its infancy. For instance, the

http relay example, now commonplace, may have been a forerunner in

1994. But, most of all, the OCaml language gained maturity and was

used to program real system applications like Unison [18].

Tradition dictates that Unix system programming must be done in C. For

this course we found it more interesting to use a higher-level

language, namely OCaml, to explain the fundamentals of Unix system

programming.

The OCaml interface to Unix system calls is more abstract. Instead

of encoding everything in terms of integers and bit fields as in C,

OCaml uses the whole power of the ML type system to clearly

represent the arguments and return values of system calls. Hence, it

becomes easier to explain the semantics of the calls instead of losing

oneself explaining how the arguments and the results have to be

en/decoded. (See, for example, the presentation of the system call

wait, page ??.)

Furthermore, due to the static type system and the clarity of its

primitives, it is safer to program in OCaml than in C. The

experienced C programmer may see these benefits as useless luxury,

however they are crucial for the inexperienced audience of this course.

A second goal of this exposition of system programming is to show

OCaml performing in a domain out of its usual applications in

theorem proving, compilation and symbolic computation. The outcome of

the experiment is rather positive, thanks to OCaml’s solid

imperative kernel and its other novel aspects like parametric

polymorphism, higher-order functions and exceptions. It also shows

that instead of applicative and imperative programming being mutually

exclusive, their combination makes it possible to integrate in the

same program complex symbolic computations and a good interface with

the operating system.

These notes assume the reader is familiar with OCaml and Unix shell

commands. For any question about the language, consult the OCaml

System documentation [2] and for questions about Unix,

read section 1 of the Unix manual or introductory books on Unix

like [5, 6].

This document describes only the programmatic interface to the Unix

system. It presents neither its implementation, neither its internal

architecture. The internal architecture of bsd 4.3 is

described in [8] and of System v

in [9]. Tanenbaum’s books [13, 14] give an overall view of

network and operating system architecture.

The Unix interface presented in this document is part of the

OCaml System available as free software at

http://caml.inria.fr/ocaml/.

1 Generalities

1.1 Modules Sys and Unix

Functions that give access to the system from OCaml are grouped into two

modules. The first module, Sys, contains those functions

common to Unix and other operating systems under which OCaml runs.

The second module, Unix, contains everything specific to

Unix.

In what follows, we will refer to identifiers from the Sys and

Unix modules without specifying which modules they come from. That is, we

will suppose that we are within the scope of the directives

open Sys and open Unix. In complete examples, we explicitly write

open, in order to be truly complete.

The Sys and Unix modules can redefine certain

identifiers of the Pervasives module, hiding previous

definitions. For example, Pervasives.stdin is different from

Unix.stdin. The previous definitions can always be obtained

through a prefix.

To compile an OCaml program that uses the

Unix library, do this:

ocamlc -o prog unix.cma mod1.ml mod2.ml mod3.ml

where the program prog is assumed to comprise of the three modules mod1,

mod2 and mod3. The modules can also be compiled separately:

ocamlc -c mod1.ml

ocamlc -c mod2.ml

ocamlc -c mod3.ml

and linked with:

ocamlc -o prog unix.cma mod1.cmo mod2.cmo mod3.cmo

In both cases, the argument unix.cma is the Unix library

written in OCaml. To use the native-code compiler rather than the

bytecode compiler, replace ocamlc with ocamlopt and

unix.cma with unix.cmxa.

If the compilation tool ocamlbuild is used, simply add the

following line to the

_tags file:

<prog.{native,byte}> : use_unix

The Unix system can also be accessed from the interactive system,

also known as the “toplevel”. If your platform supports dynamic

linking of C libraries, start an ocaml toplevel and type in the

directive:

#load "unix.cma";;

Otherwise, you will need to create an interactive system containing

the pre-loaded system functions:

ocamlmktop -o ocamlunix unix.cma

This toplevel can be started by:

./ocamlunix

1.2 Interface with the calling program

When running a program from a shell (command interpreter), the shell

passes arguments and an environment to the program. The

arguments are words on the command line that follow the name of the

command. The environment is a set of strings of the form

variable=value, representing the global bindings of environment

variables: bindings set with setenv var=val for the

csh shell, or with var=val; export var for

the sh shell.

The arguments passed to the program are in the string array

Sys.argv:

The environment of the program is obtained by the function

Unix.environment:

A more convenient way of looking up the environment is to use the

function Sys.getenv:

Sys.getenv v returns the value associated with the variable name

v in

the environment, raising the exception Not_found if this

variable is not bound.

Example

As a first example, here is the echo program, which prints a

list of its arguments, as does the Unix command of the same name.

let echo () =

let len = Array.length Sys.argv in

if len > 1 then

begin

print_string Sys.argv.(1);

for i = 2 to len - 1 do

print_char ' ';

print_string Sys.argv.(i);

done;

print_newline ();

end;;

echo ();;

* * *

A program can be terminated at any point with a call to exit:

The argument is the return code to send back to the calling program. The

convention is to return 0 if all has gone well, and to return a

non-zero code to signal an error. In conditional constructions, the

sh shell interprets the return code 0 as the boolean

“true”, and all non-zero codes as the boolean “false”.

When a program terminates normally after executing all of the

expressions of which it is composed, it makes an implicit call to

exit 0. When a program terminates prematurely because an

exception was raised but not caught, it makes an implicit call to

exit 2.

The function exit always flushes the buffers of all channels open for

writing. The function at_exit lets one register other actions

to be carried out when the program terminates.

val at_exit : (unit -> unit) -> unit

The last function to be registered is called first. A function registered with

at_exit cannot be unregistered. However, this is not a

real restriction: we can easily get the same effect with a function

whose execution depends on a global variable.

1.3 Error handling

Unless otherwise indicated, all functions in the Unix module

raise the exception Unix_error in case of error.

The second argument of the Unix_error exception is the name of

the system call that raised the error. The third argument identifies,

if possible, the object on which the error occurred; for example, in

the case of a system call taking a file name as an argument, this file name will be

in the third position in Unix_error. Finally, the first argument

of the exception is an error code indicating the nature of the

error. It belongs to the variant type error:

type error = E2BIG | EACCES | EAGAIN | ... | EUNKNOWNERR

of int

Constructors of this type have the same names and meanings as those

used in the posix convention and certain errors from

unix98 and bsd. All other errors use the constructor EUNKOWNERR.

Given the semantics of exceptions, an error that is not specifically

foreseen and intercepted by a try propagates up to the top of a

program and causes it to terminate prematurely. In small

applications, treating unforeseen errors as fatal is a good practice.

However, it is appropriate to display the error clearly. To do this,

the Unix module supplies the handle_unix_error function:

The call handle_unix_error f x applies function f to the

argument x. If this raises the exception Unix_error, a

message is displayed describing the error, and the program is

terminated with exit 2. A typical use is

handle_unix_error prog ();;

where the function prog : unit -> unit executes the body of the

program. For reference, here is how handle_unix_error is

implemented.

1 open Unix;;

2 let handle_unix_error f arg =

3 try

4 f arg

5 with Unix_error(err, fun_name, arg) ->

6 prerr_string Sys.argv.(0);

7 prerr_string ": \"";

8 prerr_string fun_name;

9 prerr_string "\" failed";

10 if String.length arg > 0

then begin

11 prerr_string " on \"";

12 prerr_string arg;

13 prerr_string "\""

14 end;

15 prerr_string ": ";

16 prerr_endline (error_message err);

17 exit 2;;

Functions of the form prerr_xxx are like the functions

print_xxx, except that they write on the error channel

stderr rather than on the standard output channel stdout.

The primitive error_message, of type

error -> string, returns a message describing the error given as an

argument (line 16). The argument number zero of the

program, namely Sys.argv.(0), contains the name of the command

that was used to invoke the program (line 6).

The function handle_unix_error handles fatal errors, i.e. errors

that stop the program. An advantage of OCaml is that it requires

all errors to be handled, if only at the highest level by

halting the program. Indeed, any error in a system call raises an

exception, and the execution thread in progress is interrupted up to

the level where the exception is explicitly caught and handled. This avoids

continuing the program in an inconsistent state.

Errors of type Unix_error can, of course, be

selectively matched. We will often see the following

function later on:

let rec restart_on_EINTR f x =

try f x with Unix_error (EINTR, _, _) -> restart_on_EINTR f x

which is used to execute a function and to restart it automatically

when it executes a system call that is interrupted (see section 4.5).

1.4 Library functions

As we will see throughout the examples, system programming often

repeats the same patterns. To reduce the code of each application to

its essentials, we will want to define library functions that

factor out the common parts.

Whereas in a complete program one knows precisely which errors can be

raised (and these are often fatal, resulting in the program being stopped),

we generally do not know the execution context in the case of library functions. We

cannot suppose that all errors are fatal. It is therefore necessary to

let the error return to the caller, which will decide on a suitable

course of action (e.g. stop the program, or handle or ignore the error). However,

the library function in general will not allow the error to simply pass

through, since it must maintain the system in a consistent state. For

example, a library function that opens a file and then applies an

operation to its file descriptor must take care to close the

descriptor in all cases, including those where the processing of the

file causes an error. This is in order to avoid a file descriptor

leak, leading to the exhaustion of file descriptors.

Furthermore, the operation applied to a file may be defined by a

function that was received as an argument, and we don’t know precisely

when or how it can fail (but the caller in general will know). We are

thus often led to protect the body of the processing with

“finalization” code, which must be executed just before the

function returns, whether normally or exceptionally.

There is no built-in finalize construct try …finalize in

the OCaml language, but it can be easily defined1:

let try_finalize f x finally y =

let res = try f x with exn -> finally y; raise exn in

finally y;

res

This function takes the main body f and the finalizer

finally, each in the form of a function, and two parameters x

and y, which are passed to their respective functions. The body

of the program f x is executed first, and its result is kept

aside to be returned after the execution of the finalizer

finally. In case the program fails, i.e. raises an exception exn,

the finalizer is run and the exception exn is raised

again. If both the main function and the finalizer fail, the

finalizer’s exception is raised (one could choose to have the main

function’s exception raised instead).

Note

In the rest of this course, we use an auxiliary library Misc

which contains several useful functions like try_finalize that are often

used in the examples. We will introduce them as they are needed. To

compile the examples of the course, the definitions of the Misc

module need to be collected and compiled.

The Misc module also contains certain functions, added for

illustration purposes, that will not be used in the course. These

simply enrich the Unix library, sometimes by redefining the

behavior of certain functions. The Misc module must thus take

precedence over the Unix module.

Examples

The course provides numerous examples. They can be compiled with

OCaml, version 4.01.0. Some programs will have to be slightly modified in order to work with

older versions.

There are two kinds of examples: “library functions” (very

general functions that can be reused) and small applications. It is

important to distinguish between the two. In the case of library functions, we

want their context of use to be as general as possible. We will thus

carefully specify their interface and attentively treat all

particular cases. In the case of small applications, an error is often

fatal and causes the program to stop executing. It is sufficient to report

the cause of an error, without needing to return to a consistent state, since

the program is stopped immediately thereafter.

2 Files

The term “file” in Unix covers several types of objects:

-

standard files: finite sets of bytes containing text or binary

information, often referred to as “ordinary” files,

- directories,

- symbolic links,

- special files (devices), which primarily provide access

to computer peripherals,

- named pipes,

- sockets named in the Unix domain.

The file concept includes both the data contained in the file and

information about the file itself (also called meta-data) like its

type, its access rights, the latest access times, etc.

2.1 The file system

To a first approximation, the file system can be considered to be a tree. The root is

represented by '/'. The branches are labeled by (file) names,

which are strings of any characters excluding '\000' and '/'

(but it is good practice to also avoid non-printing characters and

spaces). The non-terminal nodes are directories: these nodes

always contain two branches . and .. which respectively

represent the directory itself and the directory’s parent. The other

nodes are sometimes called files, as opposed to directories,

but this is ambiguous, as we can also designate any node as a

“file”. To avoid all ambiguity we refer to them as

non-directory files.

The nodes of the tree are addressed by paths. If the start of the path

is the root of the file hierarchy, the path is absolute, whereas if the

start is a directory it is relative. More precisely, a relative

path is a string of file names separated by the character

'/'. An absolute path is a relative path preceded by the

the character '/' (note the double use of this character both as

a separator and as the name of the root node).

The Filename module handles paths in a portable

manner. In particular, concat concatenates paths without

referring to the character '/', allowing the code to function equally

well on other operating systems (for example, the path separator character

under Windows is '\'). Similarly, the Filename module

provides the string values current_dir_name and

parent_dir_name to represent the branches

. and .. The functions basename and

dirname return the prefix d and the suffix

b from a path p such that the paths p and

d/b refer to the same file, where d is the directory in

which the file is found and b is the name of the file. The

functions defined in Filename operate only on paths,

independently of their actual existence within the file hierarchy.

In fact, strictly speaking, the file hierarchy is not a tree. First

the directories . and .. allow a directory to refer to

itself and to move up in the hierarchy to define paths leading from a

directory to itself. Moreover, non-directory files can have many

parents (we say that they have many hard links). Finally,

there are also symbolic links which can be seen as

non-directory files containing a path. Conceptually, this path can be

obtained by reading the contents of the symbolic link like an ordinary

file. Whenever a symbolic link occurs in the middle of a path we have

to follow its path transparently. If s is a symbolic link whose

value is the path l, then the path p/s/q represents the file

l/q if l is an absolute path or the file p/l/q if

l is a relative path.

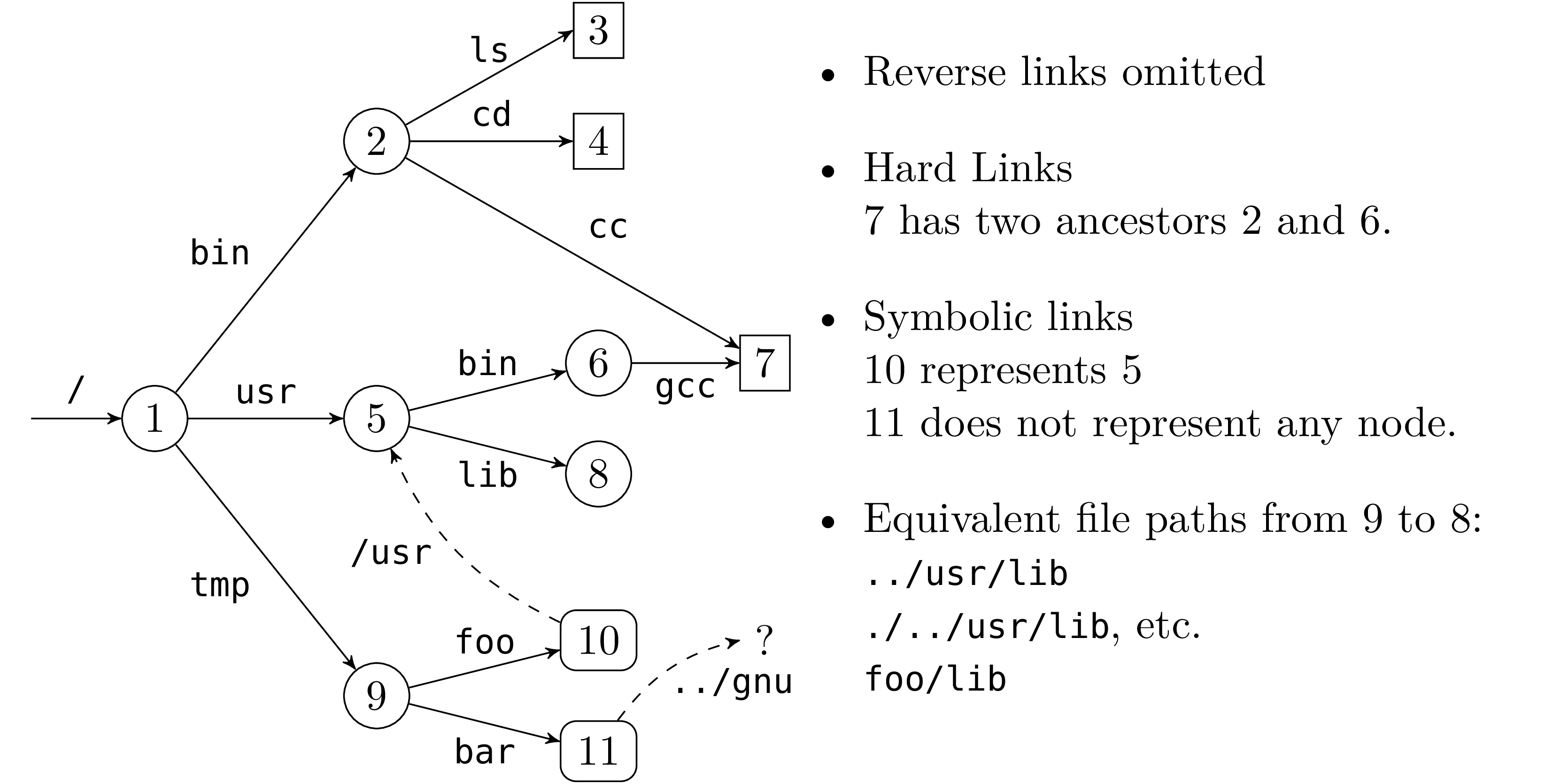

Figure 1 gives an example of a file hierarchy. The

symbolic link 11 corresponding to the path /tmp/bar whose

path value is the relative path ../gnu, does not refer to any

existing file in the hierarchy (at the moment).

In general, a recursive traversal of the hierarchy will terminate

if the following rules are respected:

-

the directories

. and .. are ignored.

- symbolic links are not followed.

But if symbolic links are followed we are traversing a graph and

we need to keep track of the nodes we have already visited to avoid loops.

Each process has a current working directory. It is returned by the

function getcwd and can be changed with

chdir. It is also possible to constrict the

view of the file hierarchy by calling

chroot p. This makes the node p, which

should be a directory, the root of the restricted view of the

hierarchy. Absolute file paths are then

interpreted according to this new root p (and of course .. at the

new root is p itself).

2.2 File names and file descriptors

There are two ways to access a file. The first is by its file

name (or path name) in the file system hierarchy. Due to

hard links, a file can have many different names. Names are values of

type string. For example the system calls unlink,

link, symlink and rename all operate at

the file name level.

val unlink : string -> unit

val link : string -> string -> unit

val symlink : string -> string -> unit

val rename : string -> string -> unit

Their effect is as follows:

-

unlink f erases the file f like the Unix command

rm -f f.

link f1 f2 creates a hard link named f2 to

the file f1 like the command ln f1 f2.

symlink f1 f2 creates a symbolic link named f2 to the file

f1 like the command ln -s f1 f2.

rename f1 f2 renames the file f1 to f2

like the command mv f1 f2.

The second way of accessing a file is by a file descriptor. A

descriptor represents a pointer to a file along with other information

like the current read/write position in the file, the access rights of

the file (is it possible to read? write?) and flags which control the

behavior of reads and writes (blocking or non-blocking, overwrite,

append, etc.). File descriptors are values of the abstract type

file_descr.

Access to a file via its descriptor is independent from the

access via its name. In particular whenever we get a file descriptor,

the file can be destroyed or renamed but the descriptor still points

on the original file.

When a program is executed, three descriptors are allocated and

tied to the variables stdin, stdout and stderr of the

Unix module:

They correspond, respectively, to the standard input, standard output

and standard error of the process.

When a program is executed on the command line without any

redirections, the three descriptors refer to the terminal. But if,

for example, the input has been redirected using the shell expression

cmd < f, then the descriptor stdin refers to the file named f

during the execution of the command cmd. Similarly, cmd > f

and cmd 2> f respectively bind the descriptors stdout and

stderr to the file named f during the execution of the

command.

2.3 Meta-attributes, types and permissions

The system calls stat, lstat and fstat

return the meta-attributes of a file; that is, information about

the node itself rather than its content. Among other things, this

information contains the identity of the file, the type of file, the

access rights, the time and date of last access and other information.

val stat : string -> stats

val lstat : string -> stats

val fstat : file_descr -> stats

The system calls stat and lstat take a file name as an

argument while fstat takes a previously opened descriptor and

returns information about the file it points to. stat and

lstat differ on symbolic links : lstat returns information

about the symbolic link itself, while stat returns information

about the file that the link points to. The result of these three

calls is a record of type stats whose fields are

described in table 1.

| Field name | Description |

|

st_dev : int | The id of the device on which the file is stored. |

st_ino : int | The id of the file (inode number) in its partition.

The pair (st_dev, st_ino) uniquely identifies the file

within the file system. |

st_kind : file_kind | The file type. The type file_kind is an enumerated type

whose constructors are:

S_REG | Regular file |

S_DIR | Directory |

S_CHR | Character device |

S_BLK | Block device |

S_LNK | Symbolic link |

S_FIFO | Named pipe |

S_SOCK | Socket

|

|

st_perm : int | Access rights for the file |

st_nlink : int | For a directory: the number of entries in the directory. For others:

the number of hard links to this file. |

st_uid : int | The id of the file’s user owner. |

st_gid : int | The id of the file’s group owner. |

st_rdev : int | The id of the associated peripheral (for special files). |

st_size : int | The file size, in bytes. |

st_atime : int | Last file content access date (in seconds from

January 1st 1970, midnight, gmt). |

st_mtime : int | Last file content modification date (idem). |

st_ctime : int | Last file state modification date: either a

write to the file or a change in access rights, user or group owner,

or number of links.

|

|

Table 1 — Fields of the stats structure

Identification

A file is uniquely identified by the pair made of its device number

(typically the disk partition where it is located) st_dev and its

inode number st_ino.

Owners

A file has one user owner st_uid and one group owner

st_gid. All the users and groups

on the machine are usually described in the

/etc/passwd and /etc/groups files. We can look up them by

name in a portable manner with the functions getpwnam and

getgrnam or by id with

getpwuid and getgrgid.

The name of the user of a running process and all the groups

to which it belongs can be retrieved with the commands

getlogin and getgroups.

The call chown changes the owner (second argument) and the

group (third argument) of a file (first argument). If we have a file

descriptor, fchown can be used instead. Only the super user

can change this information arbitrarily.

val chown : string -> int -> int -> unit

val fchown : file_descr -> int -> int -> unit

Access rights

Access rights are encoded as bits in an integer, and the type

file_perm is just an abbreviation for the type

int. They specify special bits and read, write and

execution rights for the user owner, the group owner and the other

users as vector of bits:

| Special | User | Group | Other |

| – | – | – | – | – | – | – | – | – | – | – | – |

OoSUGO

|

where in each of the user, group and other fields, the order of bits

indicates read (r), write (w) and execute (x) rights.

The permissions on a file are the union of all these individual

rights, as shown in table 2.

| Bit (octal) | Notation ls -l | Access right |

|

0o100 | --x------ | executable by the user owner |

0o200 | -w------- | writable by the user owner |

0o400 | r-------- | readable by the user owner |

|

0o10 | -----x--- | executable by members of the group owner |

0o20 | ----w---- | writable by members of the group owner |

0o40 | ---r---- | readable by members of the group owner |

|

0o1 | --------x | executable by other users |

0o2 | -------w- | writable by other users |

0o4 | ------r-- | readable by other users |

|

0o1000 | --------t | the bit t on the group (sticky bit) |

0o2000 | -----s--- | the bit s on the group (set-gid) |

0o4000 | --s------ | the bit s on the user (set-uid) |

|

Table 2 — Permission bits

For files, the meaning of read, write and execute permissions is

obvious. For a directory, the execute permission means the right to

enter it (to chdir to it) and read permission the right to list

its contents. Read permission on a directory is however not needed to

read its files or sub-directories (but we then need to know their

names).

The special bits do not have meaning unless the x bit is set (if

present without x set, they do not give additional rights). This

is why their representation is superimposed on the bit x and

the letters S and T are used instead of s and t

whenever x is not set. The bit t allows sub-directories to

inherit the permissions of the parent directory. On a directory,

the bit s allows the use of the directory’s uid or gid rather

than the user’s to create directories. For an executable file,

the bit s allows the changing at execution time of the user’s

effective identity or group with the system calls setuid

and setgid.

The process also preserves its original identities unless

it has super user privileges, in which case setuid and

setgid change both its effective and original user and group

identities. The original identity is preserved to allow

the process to subsequently recover it as its effective identity

without needing further privileges. The system calls getuid and

getgid return the original identities and

geteuid and getegid return the effective identities.

A process also has a file creation mask encoded the same way file

permissions are. As its name suggests, the mask specifies prohibitions

(rights to remove): during file creation a bit set to 1 in the

mask is set to 0 in the permissions of the created file. The mask

can be consulted and changed with the system call umask:

Like many system calls that modify system variables, the modifying

function returns the old value of the variable. Thus, to just look up

the value we need to call the function twice. Once with an arbitrary

value to get the mask and a second time to put it back. For example:

let m = umask 0 in ignore (umask m); m

File access permissions can be modified with the system calls

chmod and fchmod:

val chmod : string -> file_perm -> unit

val fchmod : file_descr -> file_perm -> unit

and they can be tested “dynamically” with the system

call access:

where requested access rights to the file are specified by a list of

values of type access_permission whose meaning is

obvious except for F_OK which just checks for the file’s

existence (without checking for the other rights). The function

raises an error if the access rights are not granted.

Note that the information inferred by access may be more

restrictive than the information returned by lstat because a file

system may be mounted with restricted rights — for example in

read-only mode. In that case access will deny a write permission

on a file whose meta-attributes would allow it. This is why we

distinguish between “dynamic” (what a process can actually do)

and “static” (what the file system specifies) information.

2.4 Operations on directories

Only the kernel can write in directories (when files are

created). Thus opening a directory in write mode is prohibited. In

certain versions of Unix a directory may be opened in read only mode

and read with read, but other versions prohibit

it. However, even if this is possible, it is preferable not to do so

because the format of directory entries vary between Unix versions and

is often complex. The following functions allow reading a directory

sequentially in a portable manner:

The system call opendir returns a directory descriptor for a

directory. readdir reads the next entry of a descriptor, and

returns a file name relative to the directory or raises the exception

End_of_file if the end of the directory is

reached. rewinddir repositions the descriptor at the

beginning of the directory and closedir closes the directory

descriptor.

Example

The following library function, in Misc, iterates a

function f over the entries of the directory dirname.

let iter_dir f dirname =

let d = opendir dirname in

try while true do f (readdir d) done

with End_of_file -> closedir d

* * *

To create a directory or remove an empty directory, we have

mkdir and rmdir:

val mkdir : string -> file_perm -> unit

val rmdir : string -> unit

The second argument of mkdir determines the access rights of the

new directory. Note that we can only remove a directory that is

already empty. To remove a directory and its contents, it is thus

necessary to first recursively empty the contents of the directory and

then remove the directory.

2.5 Complete example: search in a file hierarchy

The Unix command find lists the files of a hierarchy matching

certain criteria (file name, type and permissions etc.). In this

section we develop a library function Findlib.find which

implements these searches and a command find that provides a version

of the Unix command find that supports the options -follow

and -maxdepth.

We specify the following interface for Findlib.find:

val find :

(Unix.error * string * string -> unit) ->

(string -> Unix.stats -> bool) -> bool -> int -> string list ->

unit

The function call

find handler action follow depth roots

traverses the file hierarchy starting from the roots specified in the

list roots (absolute or relative to the current directory of the

process when the call is made) up to a maximum depth depth and following

symbolic links if the flag follow is set. The paths found under

the root r include r as a prefix. Each found path p is

given to the function action along with the data returned by

Unix.lstat p (or Unix.stat p if follow is true).

The function action returns a boolean indicating, for

directories, whether the search should continue for its contents (true)

or not (false).

The handler function reports traversal errors of type

Unix_error. Whenever an error occurs the arguments of the

exception are given to the handler function and the traversal

continues. However when an exception is raised by the functions

action or handler themselves, we immediately stop the

traversal and let it propagate to the caller. To propagate an

Unix_error exception without catching it like a traversal error,

we wrap these exceptions in the Hidden exception (see

hide_exn and reveal_exn).

1 open Unix;;

2

3 exception Hidden

of exn

4 let hide_exn f x =

try f x

with exn -> raise (Hidden exn);;

5 let reveal_exn f x =

try f x

with Hidden exn -> raise exn;;

6

7 let find on_error on_path follow depth roots =

8 let rec find_rec depth visiting filename =

9 try

10 let infos = (

if follow

then stat

else lstat) filename

in

11 let continue = hide_exn (on_path filename) infos

in

12 let id = infos.st_dev, infos.st_ino

in

13 if infos.st_kind = S_DIR && depth > 0 && continue &&

14 (not follow || not (List.mem id visiting))

15 then

16 let process_child child =

17 if (child <> Filename.current_dir_name &&

18 child <> Filename.parent_dir_name)

then

19 let child_name = Filename.concat filename child

in

20 let visiting =

21 if follow

then id :: visiting

else visiting

in

22 find_rec (depth-1) visiting child_name

in

23 Misc.iter_dir process_child filename

24 with Unix_error (e, b, c) -> hide_exn on_error (e, b, c)

in

25 reveal_exn (List.iter (find_rec depth [])) roots;;

A directory is identified by the id pair (line 12)

made of its device and inode number. The list visiting keeps

track of the directories that have already been visited. In fact

this information is only needed if symbolic links are followed

(line 21).

It is now easy to program the find command. The essential part of

the code parses the command line arguments with the Arg

module.

let find () =

let follow = ref false in

let maxdepth = ref max_int in

let roots = ref [] in

let usage_string =

("Usage: " ^ Sys.argv.(0) ^ " [files...] [options...]") in

let opt_list = [

"-maxdepth", Arg.Int ((:=) maxdepth), "max depth search";

"-follow", Arg.Set follow, "follow symbolic links";

] in

Arg.parse opt_list (fun f -> roots := f :: !roots) usage_string;

let action p infos = print_endline p; true in

let errors = ref false in

let on_error (e, b, c) =

errors := true; prerr_endline (c ^ ": " ^ Unix.error_message e) in

Findlib.find on_error action !follow !maxdepth

(if !roots = [] then [ Filename.current_dir_name ]

else List.rev !roots);

if !errors then exit 1;;

Unix.handle_unix_error find ();;

Although our find command is quite limited, the library

function FindLib.find is far more general, as the following

exercise shows.

Exercise 1

Use the function FindLib.find to write a command

find_but_CVS equivalent to the Unix command:

find . -type d -name CVS -prune -o -print

which, starting from the current directory, recursively prints

files without printing or entering directories whose name is CVS.

Answer.

* * *

let main () =

let action p infos =

let b = not (infos.st_kind = S_DIR || Filename.basename p = "CVS") in

if b then print_endline p; b in

let errors = ref false in

let error (e,c,b) =

errors:= true; prerr_endline (b ^ ": " ^ error_message e) in

Findlib.find error action false max_int [ "." ];;

handle_unix_error main ()

* * *

Exercise 2

The function getcwd is not a system call but is defined in the

Unix module. Give a “primitive” implementation of

getcwd. First describe the principle of your algorithm with words

and then implement it (you should avoid repeating the same system

call).

Answer.

* * *

Here are some hints. We move up from the current position towards the

root and construct backwards the path we are looking for. The root can

be detected as the only directory node whose parent is equal to itself

(relative to the root . and .. are equal). To find the name

of a directory r we need to list the contents of its parent

directory and detect the file that corresponds to r.

* * *

2.6 Opening a file

The openfile function allows us to obtain a descriptor for

a file of a given name (the corresponding system call

is open, however open is a keyword in OCaml).

val openfile :

string -> open_flag list -> file_perm -> file_descr

The first argument is the name of the file to open. The second

argument, a list of flags from the enumerated type

open_flag, describes the mode in which the file should

be opened and what to do if it does not exist. The third argument of

type file_perm defines the file’s access rights,

should the file be created. The result is a file descriptor for the

given file name with the read/write position set to the beginning of the

file.

The flag list must contain exactly one of the following flags:

O_RDONLY | Open in read-only mode. |

O_WRONLY | Open in write-only mode. |

O_RDWR | Open in read and write mode.

|

These flags determine whether read or write calls can be done on the

descriptor. The call openfile fails if a process requests an open

in write (resp. read) mode on a file on which it has no right to

write (resp. read). For this reason O_RDWR should not be used

systematically.

The flag list can also contain one or more of the following values:

O_APPEND | Open in append mode. |

O_CREAT | Create the file if it does not exist. |

O_TRUNC | Truncate the file to zero if it already exists. |

O_EXCL | Fail if the file already exists.

|

O_NONBLOCK | Open in non-blocking mode. |

O_NOCTTY | Do not function in console mode.

|

O_SYNC | Perform the writes in synchronous mode. |

O_DSYNC | Perform the data writes in synchronous mode. |

O_RSYN | Perform the reads in synchronous mode.

|

The first group defines the behavior to follow if

the file exists or not. With:

-

O_APPEND, the read/write position will be set at the end of

the file before each write. Consequently any written data will be

added at the end of file. Without O_APPEND, writes occur at the

current read/write position (initially, the beginning of the file). O_TRUNC, the file is truncated when it

is opened. The length of the file is set to zero and the bytes

contained in the file are lost, and writes start from an empty file.

Without O_TRUNC, the writes are made at the start of the file

overwriting any data that may already be there.O_CREAT, creates the file if it does not exist. The created

file is empty and its access rights are specified by the third argument

and the creation mask of the process (the mask can be retrieved

and changed with umask).O_EXCL, openfile fails if the file already exists.

This flag, used in conjunction with O_CREAT allows to use

files as locks1. A process

which wants to take the lock calls openfile on the file with

O_EXCL and O_CREAT. If the file already exists, this means

that another process already holds the lock and openfile raises

an error. If the file does not exist openfile returns without

error and the file is created, preventing other processes from

taking the lock. To release the lock the process calls

unlink on it. The creation of a file is an atomic operation: if

two processes try to create the same file in parallel with the

options O_EXCL and O_CREAT, at most one of them can

succeed. The drawbacks of this technique is that a process must

busy wait to acquire a lock that is currently held and

the abnormal termination of a process holding a lock may never

release it.

Example

Most programs use 0o666 for the third argument

to openfile. This means rw-rw-rw- in symbolic notation.

With the default creation mask of 0o022, the

file is thus created with the permissions rw-r--r--. With a more

lenient mask of 0o002, the file is created with the permissions

rw-rw-r--.

* * *

Example

To read from a file:

openfile filename [O_RDONLY] 0

The third argument can be anything as O_CREAT is not specified, 0

is usually given.

To write to an empty a file without caring about any previous content:

openfile filename [O_WRONLY; O_TRUNC; O_CREAT] 0o666

If the file will contain executable code (e.g. files

created by ld, scripts, etc.), we create it with execution permissions:

openfile filename [O_WRONLY; O_TRUNC; O_CREAT] 0o777

If the file must be confidential (e.g. “mailbox” files where

mail stores read messages), we create it with write permissions

only for the user owner:

openfile filename [O_WRONLY; O_TRUNC; O_CREAT] 0o600

To append data at the end of an existing file or create it if it

doesn’t exist:

openfile filename [O_WRONLY; O_APPEND; O_CREAT] 0o666

* * *

The O_NONBLOCK flag guarantees that if the file is a named pipe

or a special file then the file opening and subsequent reads and

writes will be non-blocking.

The O_NOCTYY flag guarantees that if the file is a control

terminal (keyboard, window, etc.), it won’t become the controlling

terminal of the calling process.

The last group of flags specifies how to synchronize

read and write operations. By default these operations are not

synchronized. With:

-

O_DSYNC, the data is written synchronously such that

the process is blocked until all the writes have been done

physically on the media (usually a disk).

O_SYNC, the file data and its meta-attributes are written

synchronously.

O_RSYNC, with O_DSYNC specifies that the data reads are

also synchronized: it is guaranteed that all current writes

(requested but not necessarily performed) to the file are really

written to the media before the next read. If O_RSYNC is

provided with O_SYNC the above also applies to meta-attributes

changes.

2.7 Reading and writing

The system calls read and write read and write

bytes in a file. For historical reasons, the system

call write is provided in OCaml under the name

single_write:

val read : file_descr -> string -> int -> int -> int

val single_write : file_descr -> string -> int -> int -> int

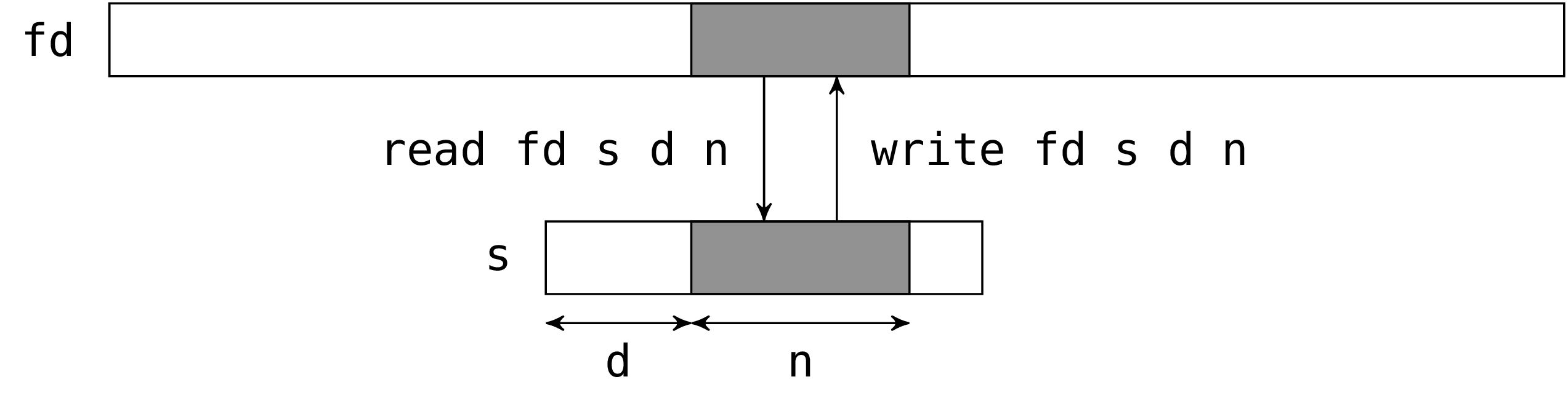

The two calls read and single_write have the same

interface. The first argument is the file descriptor to act on. The

second argument is a string which will hold the read bytes (for

read) or the bytes to write (for single_write). The third

argument is the position in the string of the first byte to be written

or read. The fourth argument is the number of the bytes to be read or

written. In fact the third and fourth argument define a sub-string of

the second argument (the sub-string should be valid, read and

single_write do not check this).

read and single_write return the number of bytes actually

read or written.

Reads and write calls are performed from the file descriptor’s current

read/write position (if the file was opened in O_APPEND mode,

this position is set at the end of the file prior to any

write). After the system call, the current position is advanced by

the number of bytes read or written.

For writes, the number of bytes actually written is usually the number

of bytes requested. However there are exceptions: (i) if it is not

possible to write the bytes (e.g. if the disk is full) (ii) the

descriptor is a pipe or a socket open in non-blocking mode (iii) due to

OCaml, if the write is too large.

The reason for (iii) is that internally OCaml uses auxiliary

buffers whose size is bounded by a maximal value. If this value is

exceeded the write will be partial. To work around this problem

OCaml also provides the function write which

iterates the writes until all the data is written or an error occurs.

The problem is that in case of error there’s no way to know the number

of bytes that were actually written. Hence single_write should be

preferred because it preserves the atomicity of writes (we know

exactly what was written) and it is more faithful to the original Unix

system call (note that the implementation of single_write is

described in section 5.7).

Example

Assume fd is a descriptor open in write-only mode.

write fd "Hello world!" 3 7

writes the characters "lo worl" in the corresponding file,

and returns 7.

* * *

For reads, it is possible that the number bytes actually read is

smaller than the number of requested bytes. For example when the end

of file is near, that is when the number of bytes between the current

position and the end of file is less than the number of requested

bytes. In particular, when the current position is at the end of file,

read returns zero. The convention “zero equals end of

file” also holds for special files, pipes and sockets. For example,

read on a terminal returns zero if we issue a ctrl-D on the

input.

Another example is when we read from a terminal. In that case,

read blocks until an entire line is available. If the line length

is smaller than the requested bytes read returns immediately with

the line without waiting for more data to reach the number of

requested bytes. (This is the default behavior for terminals, but it

can be changed to read character-by-character instead of

line-by-line, see section 2.13 and the type

terminal_io for more details.)

Example

The following expression reads at most 100 characters from standard

input and returns them as a string.

let buffer = String.create 100 in

let n = read stdin buffer 0 100 in

String.sub buffer 0 n

* * *

Example

The function really_read below has the same interface as

read, but makes additional read attempts to try to get

the number of requested bytes. It raises the exception

End_of_file if the end of file is reached while doing this.

let rec really_read fd buffer start length =

if length <= 0 then () else

match read fd buffer start length with

| 0 -> raise End_of_file

| r -> really_read fd buffer (start + r) (length - r);;

* * *

2.8 Closing a descriptor

The system call close closes a file descriptor.

val close : file_descr -> unit

Once a descriptor is closed, all attempts to read, write, or do

anything else with the descriptor will fail. Descriptors should be

closed when they are no longer needed; but it is not mandatory. In

particular, and in contrast to Pervasives’

channels, a file descriptor doesn’t need to be closed to ensure that

all pending writes have been performed as write requests made with

write are immediately transmitted to the kernel. On the other

hand, the number of descriptors allocated by a process is limited by

the kernel (from several hundreds to thousands). Doing a close on

an unused descriptor releases it, so that the process does not run out

of descriptors.

2.9 Complete example: file copy

We program a command file_copy which, given two arguments

f1 and f2, copies to the file f2 the bytes contained

in f1.

open Unix;;

let buffer_size = 8192;;

let buffer = String.create buffer_size;;

let file_copy input_name output_name =

let fd_in = openfile input_name [O_RDONLY] 0 in

let fd_out = openfile output_name [O_WRONLY; O_CREAT; O_TRUNC] 0o666 in

let rec copy_loop () = match read fd_in buffer 0 buffer_size with

| 0 -> ()

| r -> ignore (write fd_out buffer 0 r); copy_loop ()

in

copy_loop ();

close fd_in;

close fd_out;;

let copy () =

if Array.length Sys.argv = 3 then begin

file_copy Sys.argv.(1) Sys.argv.(2);

exit 0

end else begin

prerr_endline

("Usage: " ^ Sys.argv.(0) ^ " <input_file> <output_file>");

exit 1

end;;

handle_unix_error copy ();;

The bulk of the work is performed by the the function file_copy.

First we open a descriptor in read-only mode on the input file and

another in write-only mode on the output file.

If the output file already exists, it is truncated (option

O_TRUNC) and if it does not exist it is created (option

O_CREAT) with the permissions rw-rw-rw- modified by the creation

mask. (This is unsatisfactory: if we copy an executable file, we would

like the copy to be also executable. We will see later how to give

a copy the same permissions as the original.)

In the copy_loop function we do the copy by blocks of

buffer_size bytes. We request buffer_size bytes to read. If

read returns zero, we have reached the end of file and the copy

is over. Otherwise we write the r bytes we have read in the

output file and start again.

Finally, we close the two descriptors. The main program copy

verifies that the command received two arguments and passes them to

the function file_copy.

Any error occurring during the copy results in a Unix_error

caught and displayed by handle_unix_error. Example of errors

include inability to open the input file because it does not

exist, failure to read because of restricted permissions, failure to

write because the disk is full, etc.

Exercise 3

Add an option -a to the program, such that

file_copy -a f1 f2 appends the contents of f1 to the end of

the file f2.

Answer.

* * *

If the option -a is supplied, we need to do

openfile output_name [O_WRONLY; O_CREAT; O_APPEND] 0o666

instead of

openfile output_name [O_WRONLY; O_CREAT; O_TRUNC] 0o666

Parsing the new option from the command line is left to the reader.

* * *

2.10 The cost of system calls and buffers

In the example file_copy, reads were made in blocks of 8192

bytes. Why not read byte per by byte, or megabyte per by megabyte?

The reason is efficiency.

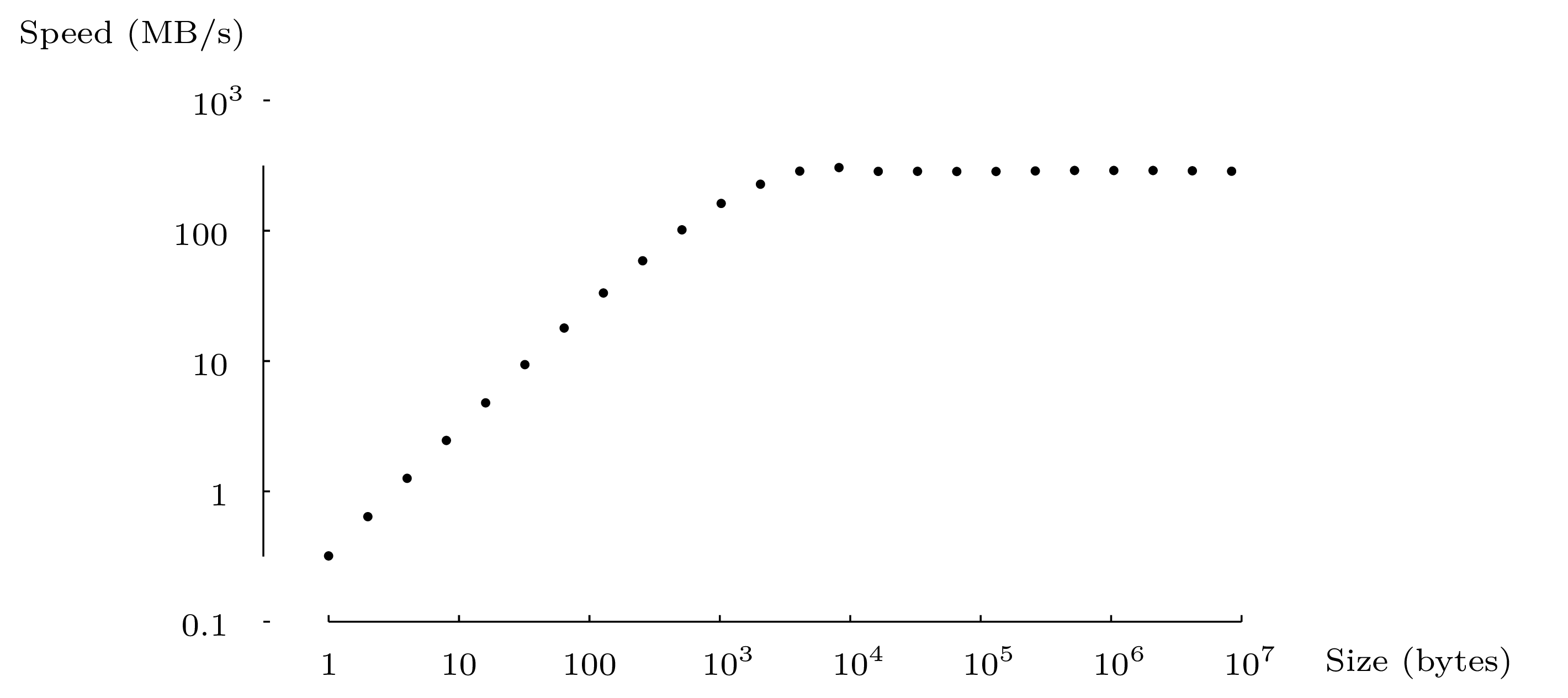

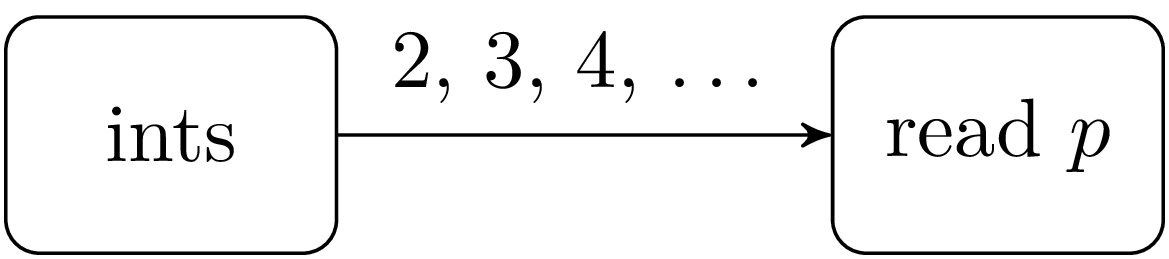

Figure 2 shows the copy speed of file_copy, in

bytes per second, against the size of blocks (the value

buffer_size). The amount of data transferred is the same

regardless of the size of the blocks.

For small block sizes, the copy speed is almost proportional to the

block size. Most of the time is spent not in data transfers but in the

execution of the loop copy_loop and in the calls to read and

write. By profiling more carefully we can see that most of the

time is spent in the calls to read and write. We conclude

that a system call, even if it has not much to do, takes a minimum of

about 4 micro-seconds (on the machine that was used for the test — a

2.8 GHz Pentium 4 ), let us say from 1 to 10 microseconds. For small

input/output blocks, the duration of the system call dominates.

For larger blocks, between 4KB and 1MB, the copy speed is constant and

maximal. Here, the time spent in system calls and the loop is small

relative to the time spent on the data transfer. Also, the buffer

size becomes bigger than the cache sizes used by the system and the

time spent by the system to make the transfer dominates the cost of a

system call2.

Finally, for very large blocks (8MB and more) the speed is slightly

under the maximum. Coming into play here is the time needed to

allocate the block and assign memory pages to it as it fills up.

The moral of the story is that, a system call, even if it does very little work,

costs dearly — much more than a normal function call: roughly, 2 to

20 microseconds for each system call, depending on the

architecture. It is therefore important to minimize the number of

system calls. In particular, read and write operations should be made

in blocks of reasonable size and not character by character.

In examples like file_copy, it is not difficult to do

input/output with large blocks. But other types of programs are more

naturally written with character by character input or output (e.g.

reading a line from a file, lexical analysis, displaying a number etc.).

To satisfy the needs of these programs, most systems provide

input/output libraries with an additional layer of software between

the application and the operating system. For example, in OCaml the

Pervasives module defines the abstract types

in_channel and

out_channel, similar to file descriptors, and

functions on these types like input_char,

input_line,

output_char, or

output_string. This layer uses buffers to

group sequences of character by character reads or writes into a

single system call to read or write. This results in better

performance for programs that proceed character by character.

Moreover this additional layer makes programs more portable: we just

need to implement this layer with the system calls provided by another

operating system to port all the programs that use this library on

this new platform.

2.11 Complete example: a small input/output library

To illustrate the buffered input/output techniques, we implement a fragment

of OCaml Pervasives library. Here is the interface:

exception End_of_file

type in_channel

val open_in : string -> in_channel

val input_char : in_channel -> char

val close_in : in_channel -> unit

type out_channel

val open_out : string -> out_channel

val output_char : out_channel -> char -> unit

val close_out : out_channel -> unit

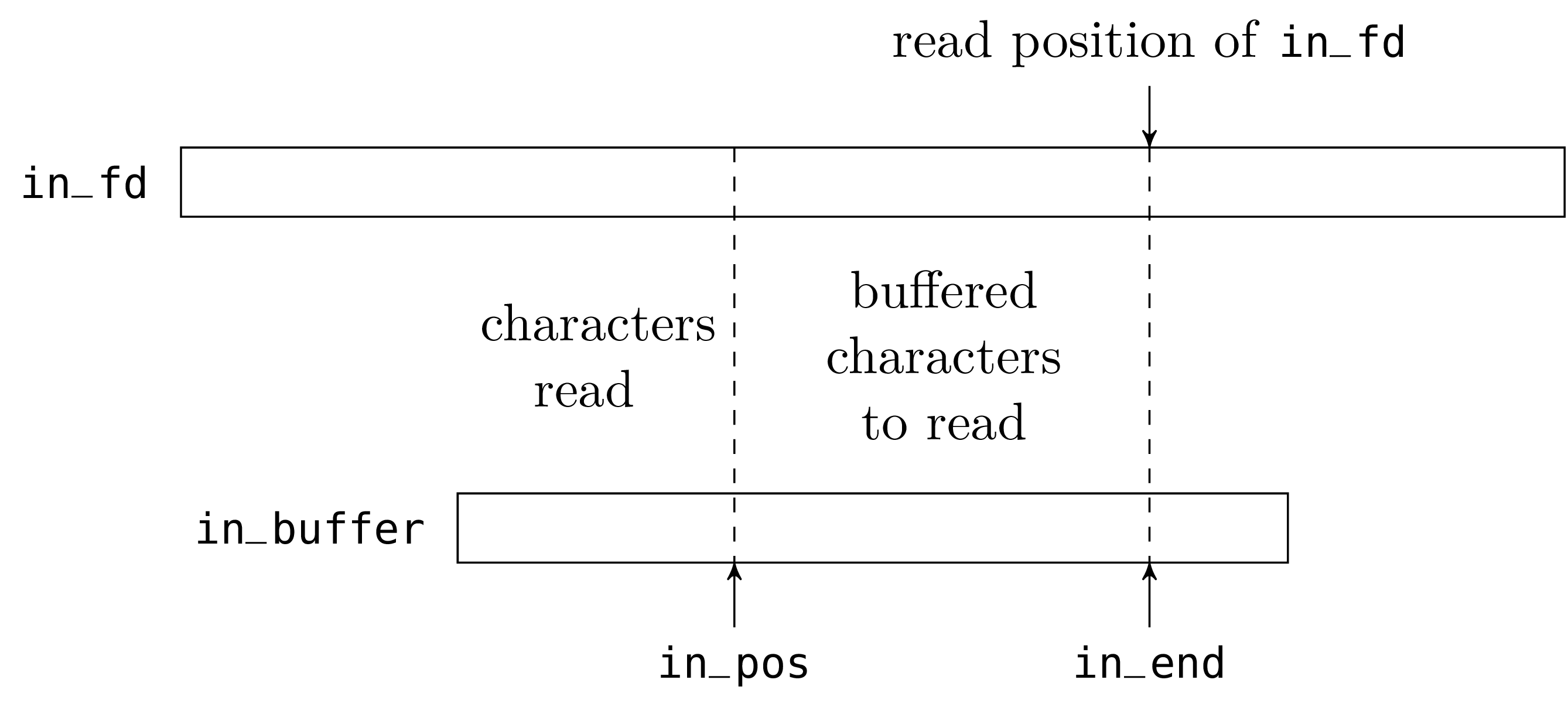

We start with the “input” part. The abstract type

in_channel is defined as follows:

open Unix;;

type in_channel =

{ in_buffer: string;

in_fd: file_descr;

mutable in_pos: int;

mutable in_end: int };;

exception End_of_file

The character string of the in_buffer field is, literally, the

buffer. The field in_fd is a (Unix) file descriptor, opened on

the file to read. The field in_pos is the current read position

in the buffer. The field in_end is the number of valid

characters preloaded in the buffer.

The fields in_pos and in_end will be modified in place during

read operations; we therefore declare them as mutable.

let buffer_size = 8192;;

let open_in filename =

{ in_buffer = String.create buffer_size;

in_fd = openfile filename [O_RDONLY] 0;

in_pos = 0;

in_end = 0 };;

When we open a file for reading, we create a buffer of reasonable size

(large enough so as not to make too many system calls; small enough so

as not to waste memory). We then initialize the field in_fd with

a Unix file descriptor opened in read-only mode on the given file. The

buffer is initially empty (it does not contain any character from the

file); the field in_end is therefore initialized to zero.

let input_char chan =

if chan.in_pos < chan.in_end then begin

let c = chan.in_buffer.[chan.in_pos] in

chan.in_pos <- chan.in_pos + 1;

c

end else begin

match read chan.in_fd chan.in_buffer 0 buffer_size

with 0 -> raise End_of_file

| r -> chan.in_end <- r;

chan.in_pos <- 1;

chan.in_buffer.[0]

end;;

To read a character from an in_channel, we do one of two

things. Either there is at least one unread character in the buffer;

that is to say, the field in_pos is less than the field

in_end. We then return this character located at in_pos, and

increment in_pos. Or the buffer is empty and we call read to

refill the buffer. If read returns zero, we have reached the end

of the file and we raise the exception End_of_file. Otherwise, we

put the number of characters read in the field in_end (we may

receive less characters than we requested, thus the buffer may be

only partially refilled) and we return the first character read.

let close_in chan =

close chan.in_fd;;

Closing an in_channel just closes the underlying Unix file descriptor.

The “output” part is very similar to the “input”

part. The only asymmetry is that the buffer now contains incomplete

writes (characters that have already been buffered but not written to

the file descriptor), and not reads in advance (characters that have

buffered, but not yet read).

type out_channel =

{ out_buffer: string;

out_fd: file_descr;

mutable out_pos: int };;

let open_out filename =

{ out_buffer = String.create 8192;

out_fd = openfile filename [O_WRONLY; O_TRUNC; O_CREAT] 0o666;

out_pos = 0 };;

let output_char chan c =

if chan.out_pos < String.length chan.out_buffer then begin

chan.out_buffer.[chan.out_pos] <- c;

chan.out_pos <- chan.out_pos + 1

end else begin

ignore (write chan.out_fd chan.out_buffer 0 chan.out_pos);

chan.out_buffer.[0] <- c;

chan.out_pos <- 1

end;;

let close_out chan =

ignore (write chan.out_fd chan.out_buffer 0 chan.out_pos);

close chan.out_fd;;

To write a character on an out_channel, we do one of two things.

Either the buffer is not full and we just store the character in the

buffer at the position out_pos and increment that value. Or the

buffer is full and we empty it with a call to write and then

store the character at the beginning of the buffer.

When we close an out_channel, we must not forget to write the

buffer contents (the characters from 0 to out_pos - 1) to the

file otherwise the writes made on the channel since the last time

the buffer was emptied would be lost.

Exercise 4

Implement the function:

val output_string : out_channel -> string -> unit

which behaves like a sequence of output_char on each

character of the string, but is more efficient.

Answer.

* * *

The idea is to copy the string to output into the buffer. We need to

take into account the case where there is not enough space in the

buffer (in that case the buffer needs to emptied), and also the case

where the string is longer than the buffer (in that case it can

be written directly). Here is a possible solution.

let output_string chan s =

let avail = String.length chan.out_buffer - chan.out_pos in

if String.length s <= avail then begin

String.blit s 0 chan.out_buffer chan.out_pos (String.length s);

chan.out_pos <- chan.out_pos + String.length s

end

else if chan.out_pos = 0 then begin

ignore (write chan.out_fd s 0 (String.length s))

end

else begin

String.blit s 0 chan.out_buffer chan.out_pos avail;

let out_buffer_size = String.length chan.out_buffer in

ignore (write chan.out_fd chan.out_buffer 0 out_buffer_size);

let remaining = String.length s - avail in

if remaining < out_buffer_size then begin

String.blit s avail chan.out_buffer 0 remaining;

chan.out_pos <- remaining

end else begin

ignore (write chan.out_fd s avail remaining);

chan.out_pos <- 0

end

end;;

* * *

2.12 Positioning

The system call lseek allows to set the current read/write

position of a file descriptor.

val lseek : file_descr -> int -> seek_command -> int

The first argument is the file descriptor and the second one the

desired position. The latter is interpreted according to the value

of the third argument of type seek_command. This

enumerated type specifies the kind of position:

SEEK_SET | Absolute position. The second argument specifies

the character number to point to. The first character of a file is at

position zero. |

SEEK_CUR | Position relative to the current position.

The second argument is an offset relative to the

current position. A positive value moves forward and a negative value

moves backwards. |

SEEK_END | Position relative to the end of file. The

second argument is an offset relative to the end of file.

As for SEEK_CUR, the offset may be positive or negative.

|

The value returned by lseek is the resulting absolute

read/write position.

An error is raised if a negative absolute position is

requested. The requested position can be located after the end

of file. In that case, a read returns zero (end of

file reached) and a write extends the file with zeros until

that position and then writes the supplied data.

Example

To position the cursor on the 1000th character of a file:

lseek fd 1000 SEEK_SET

To rewind by one character:

lseek fd (-1) SEEK_CUR

To find out the size of a file:

let file_size = lseek fd 0 SEEK_END in ...

* * *

For descriptors opened in O_APPEND mode, the read/write position

is automatically set at the end of the file before each write. Thus

a call lseek is useless to set the write position, it may however

be useful to set the read position.

The behavior of lseek is undefined on certain type of files for

which absolute access is meaningless: communication devices (pipes,

sockets) but also many special files like the terminal.

In most Unix implementations a call to lseek on these files is

simply ignored: the read/write position is set but read/write

operations ignore it. In some implementations, lseek on a pipe or

a socket triggers an error.

Exercise 5

The command tail displays the last n lines of a file.

How can it be implemented efficiently on regular files? What can we

do for the other kind of files? How can the option -f be

implemented (cf. man tail)?

Answer.

* * *

A naive implementation of tail is to read the file sequentially

from the beginning, keeping the last n lines read in a circular

buffer. When we reach the end of file, we display the buffer.

When the data comes from a pipe or a special file which

does not implement lseek, there is no better way.

However if the data is coming from a normal file, it is better to read

the file from the end. With lseek, we read the last 4096

characters. We scan them for the end of lines. If there are at least

n of them, we output and display the corresponding lines.

Otherwise, we start again by adding the next preceding 4096

characters, etc.

To add the option -f, we first proceed as above and then we go

back at the end of the file and try to read from there. If

read returns data we display it immediately and start again. If it

returns 0 we wait some time (sleep 1) and try again.

* * *

2.13 Operations specific to certain file types

In Unix, data communication is done via file descriptors representing

either permanent files (files, peripherals) or volatile ones (pipes

and sockets, see chapters 5 and 6). File

descriptors provide a uniform and media-independent interface for data

communication. Of course the actual implementation of the operations

on a file descriptor depends on the underlying media.

However this uniformity breaks when we need to access all the

features provided by a given media. General operations (opening,

writing, reading, etc.) remain uniform on most descriptors but even,

on certain special files, these may have an ad hoc behavior defined

by the kind of peripheral and its parameters. There are also

operations that work only with certain kind of media.

Normal files

We can shorten a normal file with the system calls

truncate and ftruncate.

The first argument is the file to truncate and the second the desired

size. All the data after this position is lost.

Symbolic links

Most operations on files “follow” symbolic links in the sense

that they do not apply to the link itself but to the file on which the

link points (for example openfile,

stat, truncate, opendir, etc.).

The two system calls symlink and readlink operate

specifically on symbolic links:

The call symlink f1 f2 creates the file f2 as a symbolic

link to f1 (like the Unix command ln -s f1 f2). The call

readlink returns the content of a symbolic link, i.e. the name of

the file to which the link points.

Special files

Special files can be of “character” or “block” type.

The former are character streams: we can read or write characters only

sequentially. These are the terminals, sound devices, printers, etc.

The latter, typically disks, have a permanent medium: characters can

be read by blocks and even seeked relative to the current position.

Among the special files, we may distinguish:

/dev/null | This is the black hole which swallows

everything we put into and from which nothing comes out. This is

extremely useful for ignoring the results of a process: we redirect

its output to /dev/null (see chapter 5). |

/dev/tty* | These are the control terminals. |

/dev/pty* | These are the pseudo-terminals: they are not real

terminals but simulate them (they provide the same interface). |

/dev/hd* | These are the disks. |

/proc | Under Linux, system parameters organized as a

file system. They allow reads and writes.

|

The usual file system calls on special files can behave differently.

However, most special files (terminals, tape drives, disks, etc.)

respond to read and write in the obvious manner (but

sometimes with restrictions on the number of bytes written or read),

but many ignore lseek.

In addition to the usual file system calls, special files which

represent peripherals must be commanded and/or configured

dynamically. For example, for a tape drive, rewind or fast forward the

tape; for a terminal, choice of the line editing mode, behavior of

special characters, serial connection parameters (speed, parity,

etc.). These operations are made in Unix with the system call

ioctl which group together all the particular

cases. However, this system call is not provided by OCaml; it is

ill-defined and cannot be treated in a uniform way.

Terminals

Terminals and pseudo-terminals are special files of type character

which can be configured from OCaml. The system call

tcgetattr takes a file descriptor open on a special file

and returns a structure of type terminal_io which

describes the status of the terminal according to the posix

standard.

type terminal_io =

{ c_ignbrk : bool; c_brk_int : bool; ...; c_vstop : char }

This structure can be modified and given to the function

tcsetattr to change the attributes of the peripheral.

val tcsetattr : file_descr -> setattr_when -> terminal_io -> unit

The first argument is the file descriptor of the peripheral. The last

argument is a structure of type terminal_io describing the

parameters of the peripheral as we want them. The second argument is a

value of the enumerated type setattr_when that

indicates when the change must be done: immediately (TCSANOW),

after having transmitted all written data (TCSADRAIN) or after

having read all the received data (TCAFLUSH). TCSADRAIN is

recommended for changing write parameters and TCSAFLUSH for read

parameters.

Example

When a password is read, characters entered by the user should not be

echoed if the standard input is connected to a terminal or a

pseudo-terminal.

let read_passwd message =

match

try

let default = tcgetattr stdin in

let silent =

{ default with

c_echo = false;

c_echoe = false;

c_echok = false;

c_echonl = false;

} in

Some (default, silent)

with _ -> None

with

| None -> input_line Pervasives.stdin

| Some (default, silent) ->

print_string message;

flush Pervasives.stdout;

tcsetattr stdin TCSANOW silent;

try

let s = input_line Pervasives.stdin in

tcsetattr stdin TCSANOW default; s

with x ->

tcsetattr stdin TCSANOW default; raise x;;

The read_passwd function starts by getting the current settings

of the terminal connected to stdin. Then it defines a modified

version of these in which characters are not echoed. If this fails the

standard input is not a control terminal and we just read a

line. Otherwise we display a message, change the terminal settings, read the

password and put the terminal back in its initial state. Care must be

taken to set the terminal back to its initial state even after a read

failure.

* * *

Sometimes a program needs to start another and connect its standard input

to a terminal (or pseudo-terminal). OCaml does not provide any

support for this3. To achieve that, we must manually look among the

pseudo-terminals (in general, they are files with names in the form of

/dev/tty[a-z][a-f0-9]) and find one that is not already open. We

can then open this file and start the program with this file on its

standard input.

Four other functions control the stream of data of a terminal

(flush waiting data, wait for the end of transmission and restart

communication).

The function tcsendbreak sends an interrupt to the

peripheral. The second argument is the duration of the interrupt

(0 is interpreted as the default value for the

peripheral).

The function tcdrain waits for all written data to

be transmitted.

val tcflush : file_descr -> flush_queue -> unit

Depending on the value of the second argument, a call to the

function tcflush discards the data written but not yet

transmitted (TCIFLUSH), or the data received but not yet read

(TCOFLUSH) or both (TCIOFLUSH).

val tcflow : file_descr -> flow_action -> unit

Depending on the value of the second argument, a call to the

function tcflow suspends the data transmission

(TCOOFF), restarts the transmission (TCOON), sends a control

character stop or start to request the

transmission to be suspended (TCIOFF) or restarted (TCION).

The function setsid puts the process in a new

session and detaches it from the terminal.

2.14 Locks on files

Two processes can modify the same file in parallel; however, their

writes may collide and result in inconsistent data. In some cases data

is always written at the end and opening the file with O_APPEND

prevents this. This is fine for log files but it does not

work for files that store, for example, a database because writes are

performed at arbitrary positions. In that case processes using the

file must collaborate in order not to step on each others toes. A

lock on the whole file can be implemented with an auxiliary file (see

page ??) but the system call lockf allows

for finer synchronization patterns by locking only parts of a file.

val lockf : file_descr -> lock_command -> int -> unit

2.15 Complete example: recursive copy of files

We extend the function file_copy (section 2.9) to

support symbolic links and directories in addition to normal files.

For directories, we recursively copy their contents.

To copy normal files we reuse the function file_copy we already

defined.

open Unix

...

let file_copy input_name output_name =

...

The function set_infos below modifies the owner, the

access rights and the last dates of access/modification

of a file. We use it to preserve this information for copied files.

let set_infos filename infos =

utimes filename infos.st_atime infos.st_mtime;

chmod filename infos.st_perm;

try

chown filename infos.st_uid infos.st_gid

with Unix_error(EPERM,_,_) -> ()

The system call utime modifies the dates of access and

modification. We use chmod and chown to re-establish

the access rights and the owner. For normal users, there are

a certain number of cases where chown will fail with a

“permission denied” error. We catch this error and ignore it.

Here’s the main recursive function.

let rec copy_rec source dest =

let infos = lstat source in

match infos.st_kind with

| S_REG ->

file_copy source dest;

set_infos dest infos

| S_LNK ->

let link = readlink source in

symlink link dest

| S_DIR ->

mkdir dest 0o200;

Misc.iter_dir

(fun file ->

if file <> Filename.current_dir_name

&& file <> Filename.parent_dir_name

then

copy_rec

(Filename.concat source file)

(Filename.concat dest file))

source;

set_infos dest infos

| _ ->

prerr_endline ("Can't cope with special file " ^ source)

We begin by reading the information of the source file. If it is

a normal file, we copy its contents with file_copy and its

information with set_infos. If it is a symbolic link, we read

where it points to and create a link pointing to the same object. If

it is a directory, we create a destination directory, then we read the

directory’s entries (ignoring the entries about the directory itself

or its parent) and recursively call copy_rec for each entry. All

other file types are ignored, with a warning.

The main program is straightforward:

let copyrec () =

if Array.length Sys.argv <> 3 then begin

prerr_endline ("Usage: " ^Sys.argv.(0)^ " <source> <destination>");

exit 2

end else begin

copy_rec Sys.argv.(1) Sys.argv.(2);

exit 0

end

;;

handle_unix_error copyrec ();;

Exercise 6

Copy hard links cleverly. As written above copy_rec creates n

duplicates of the same file whenever a file occurs under n different

names in the hierarchy to copy. Try to detect this situation, copy

the file only once and make hard links in the destination hierarchy.

Answer.

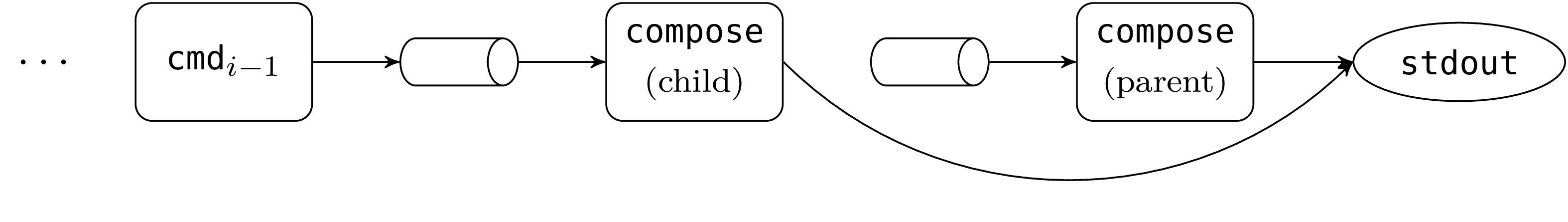

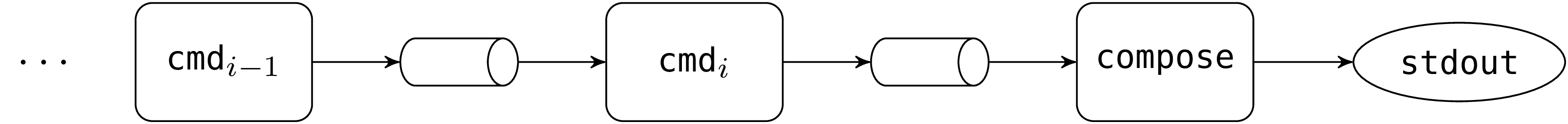

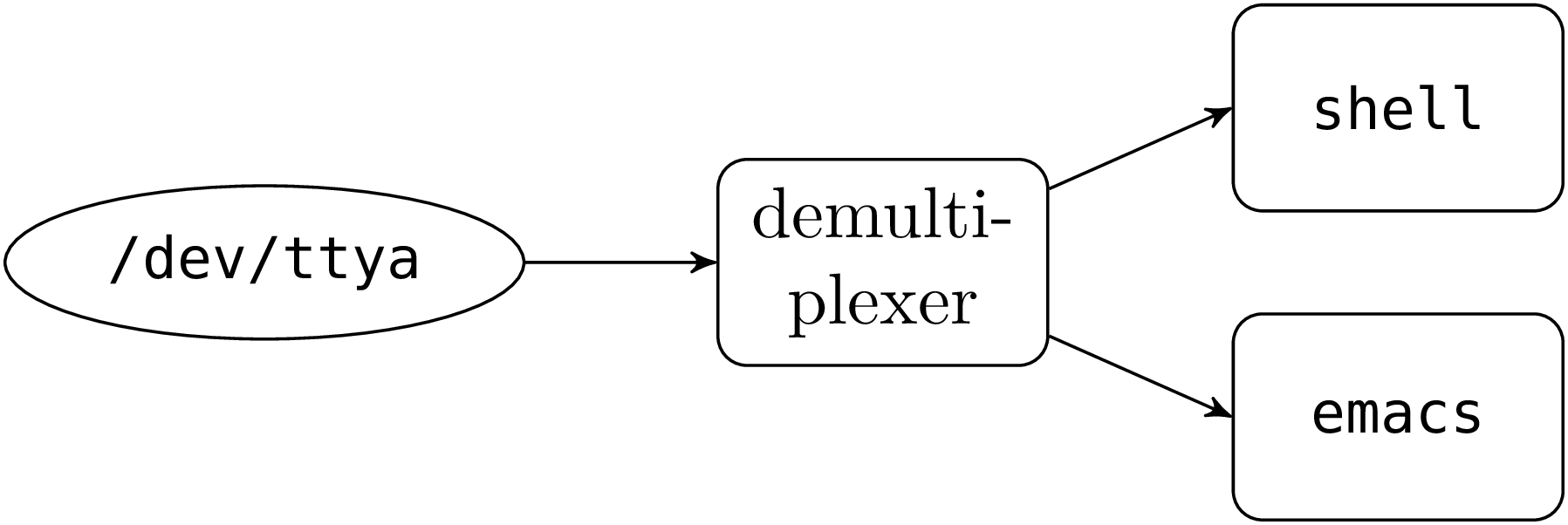

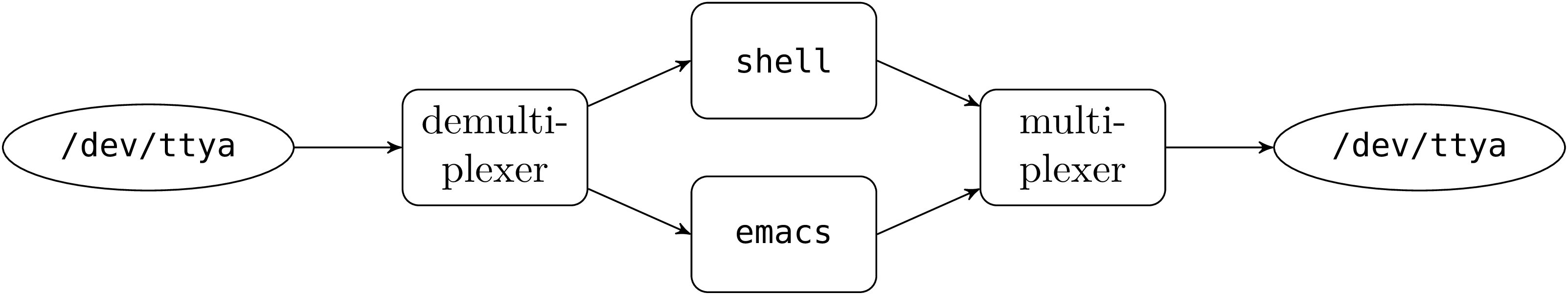

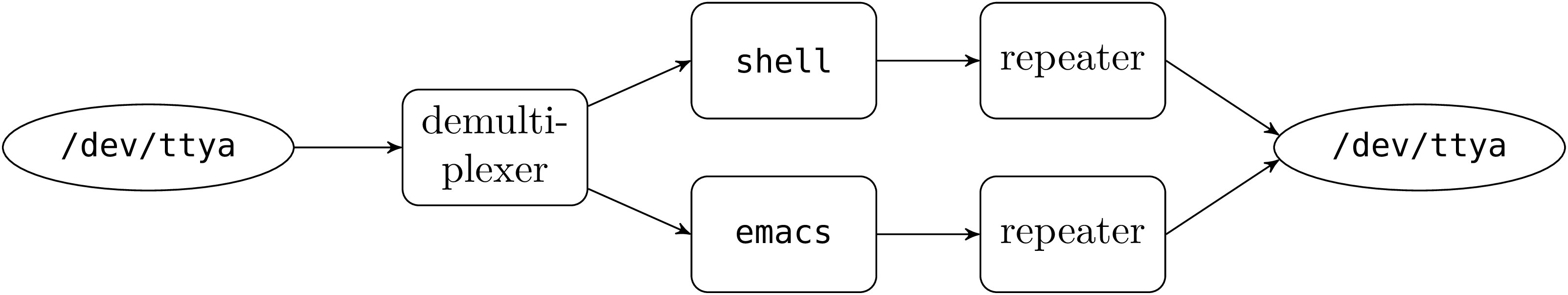

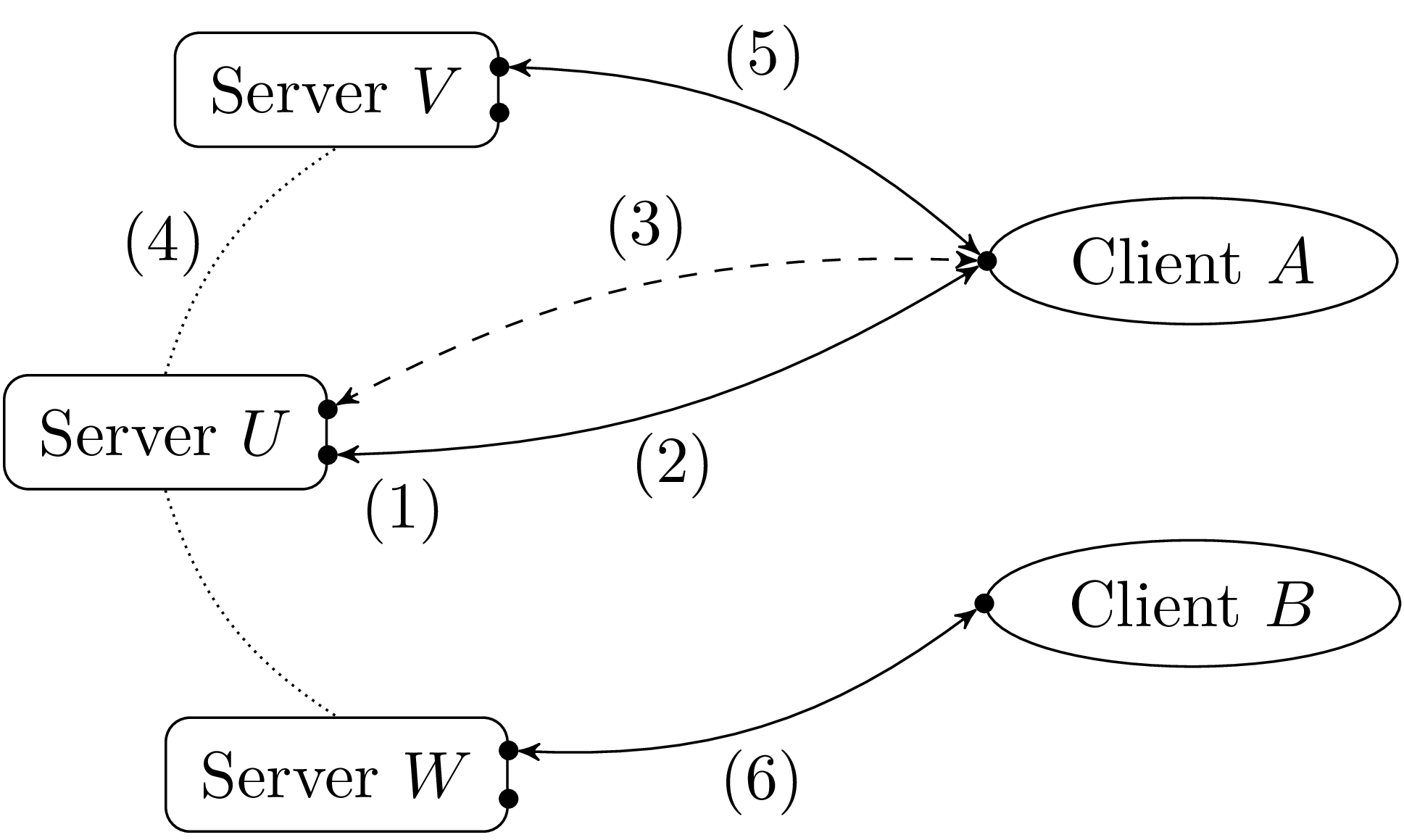

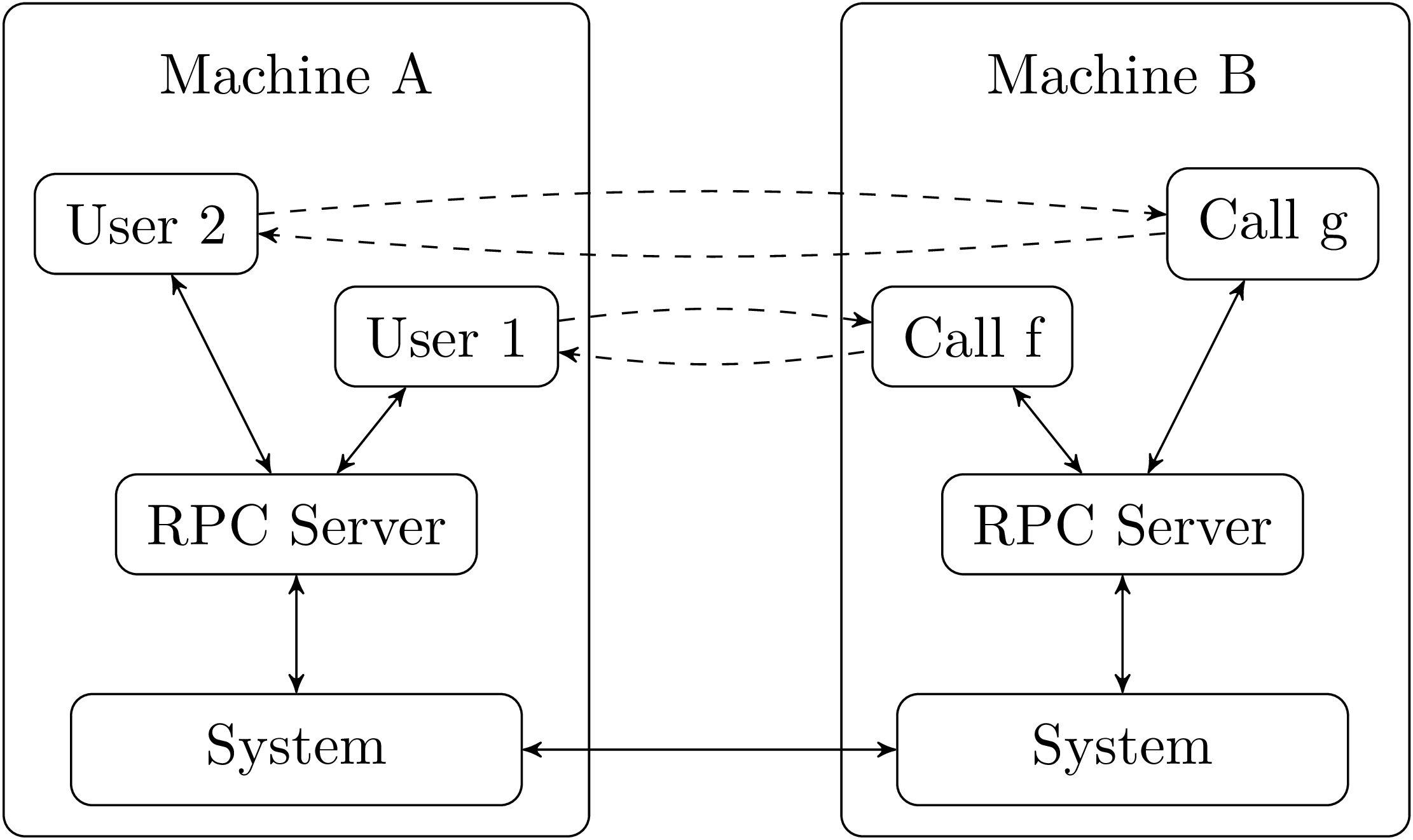

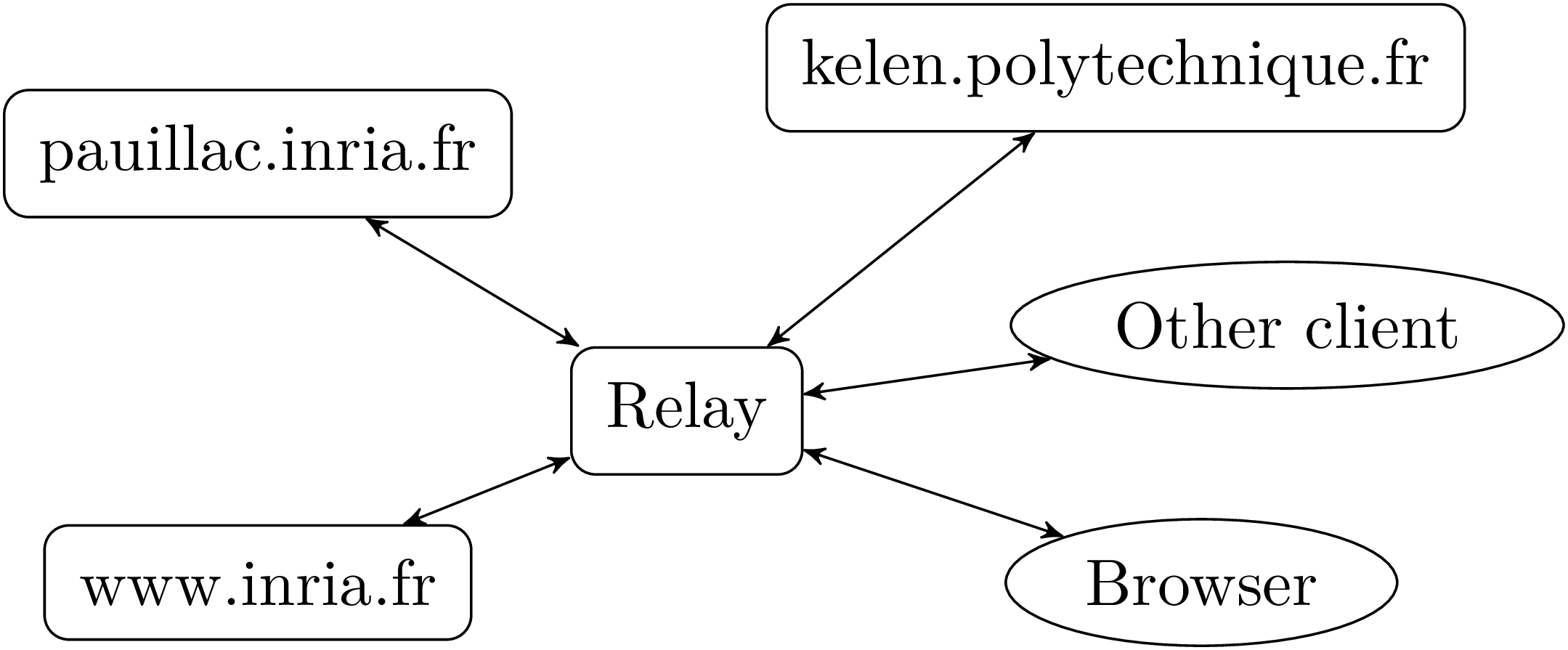

* * *